Hard to tell from that video what is going on, but with 16-bit data the I2S frame will be half empty. Connect LRCK to scope channel 1 and SD to channel 2 and view single captures triggered by LRCK. If you repeat that a few times you should be able to see whether or not the data is the same in every sample.
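
To illustrate the "half empty" frame mentioned above, here is a minimal sketch of how a 16-bit sample would sit in a 32-bit slot. It assumes MSB-first data placed in the upper half of the slot; the helper name `i2s_slot_from_s16` is made up for this example and is not part of any ST library.

```c
#include <stdint.h>

/* Hypothetical helper: place a signed 16-bit sample in the upper half
 * of a 32-bit slot. The lower 16 bits stay zero, which is the
 * "half empty" part of the frame seen on the scope. */
static uint32_t i2s_slot_from_s16(int16_t sample)
{
    return (uint32_t)(uint16_t)sample << 16;
}
```

On the scope this would show up as 16 clock periods of changing data followed by 16 periods of constant low level in each half of the LRCK cycle.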

It is working now! 192 kHz @ 32 bits - pure sound! The reason for the strange SAI behaviour was the Data Cache. I just disabled the Data Cache and the sound distortions disappeared! SAI DMA is used in normal mode.

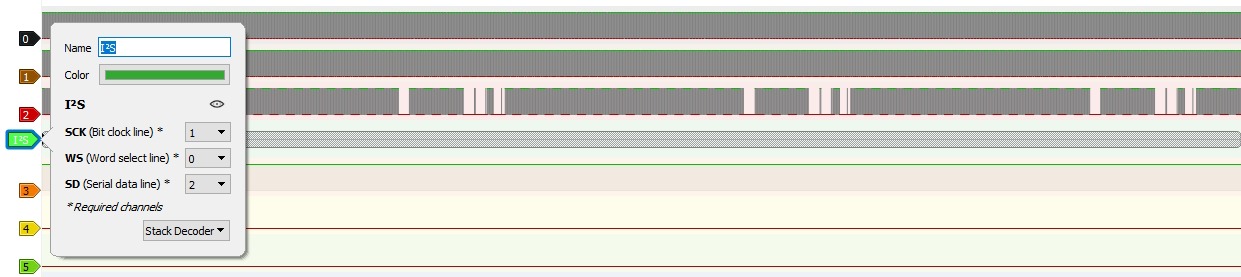

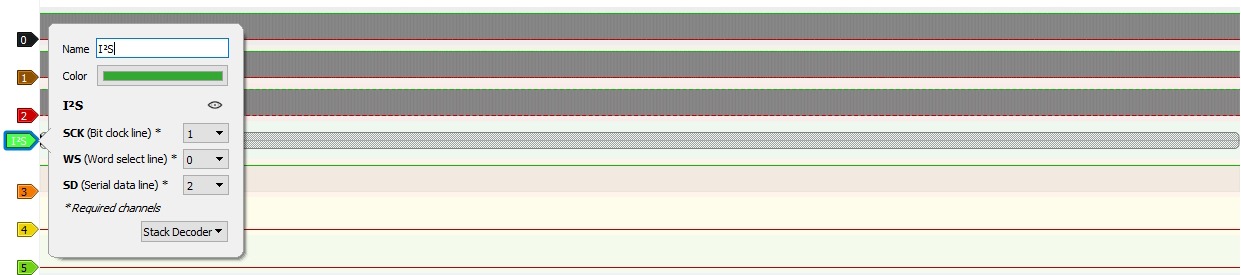

For those who may be interested in SAI behaviour with the Data Cache enabled / disabled, which I tried to explain in the previous post, here are diagrams captured with a logic analyzer.

1) Data Cache enabled. Data (line 2) are lost periodically.

2) Data Cache disabled. Data are never lost and are transmitted continuously.

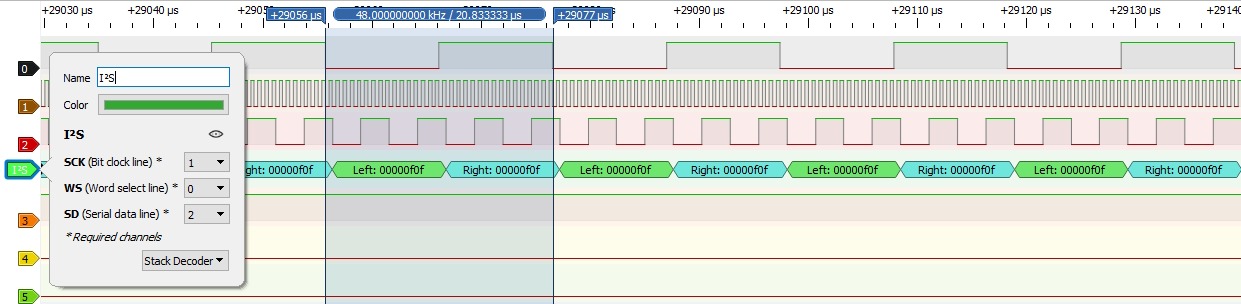

3) Data Cache disabled, the previous diagram zoomed in; test data 0x0F are transmitted.

@bohrok2610, thank you very much for your time and support! You helped me a lot!

D-Cache and DMA is terrible in STM32F7/H7.

Since disabling the D-Cache entirely is not a good idea for other parts of the firmware, it is better to set up a separate RAM area for DMA buffers and define it as "non-cacheable" with the MPU, while the rest of RAM remains cacheable.
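
One detail that tends to matter when carving out such a region: the Cortex-M7 (ARMv7-M) MPU only accepts power-of-two region sizes of at least 32 bytes, with the base address aligned to the region size, and the SIZE field encodes the size as 2^(SIZE+1) bytes. The following is a rough host-side sketch of that arithmetic; the helper names are hypothetical, and the actual region setup (for example through the HAL MPU configuration calls and the linker script) is not shown.

```c
#include <stdint.h>

/* Hypothetical helper: smallest power-of-two region size in bytes that
 * covers `bytes`. The ARMv7-M MPU does not allow regions below 32 bytes. */
static uint32_t mpu_region_bytes(uint32_t bytes)
{
    uint32_t size = 32u;
    while (size < bytes && size < 0x80000000u) {
        size <<= 1;
    }
    return size;
}

/* Hypothetical helper: value of the 5-bit SIZE field for a region,
 * using the rule region size = 2^(SIZE + 1) bytes. */
static uint32_t mpu_size_field(uint32_t region_bytes)
{
    uint32_t field = 0u;
    while (field < 30u && (2u << field) < region_bytes) {
        field++;
    }
    return field;
}

/* The region base address must be a multiple of the region size. */
static int mpu_base_is_aligned(uint32_t base, uint32_t region_bytes)
{
    return (base & (region_bytes - 1u)) == 0u;
}
```

For example, two 1536-byte DMA buffers (3072 bytes in total) would need a 4096-byte region with SIZE = 11, placed at an address that is a multiple of 4096.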

Nice to hear that it is working!

This D-cache issue was already discussed in another thread https://www.diyaudio.com/community/...bit-stereo-balanced-input.390996/post-7162632 (post #89 onwards).

I have D-cache enabled and have separate RAM for DMA buffers using MPU but in my case I did not see any issues even with D-cache disabled.

@bohrok2610, how do you play 384 kHz / 768 kHz audio? I have not managed to do that yet. Windows lists 384 kHz in the audio device settings, but when I choose, for example, 384 kHz @ 32 bit (or 384 kHz @ 24 bit) and then click the play button in a player, the player seems to be playing audio, yet in the debugger I can see that no data are transmitted from the PC to the audio device.

I mean: what player do you use?

In Win10, WASAPI exclusive played any sample rate my Linux UAC2 gadget was configured to support/report; tested up to 4 MHz https://www.diyaudio.com/community/threads/linux-usb-audio-gadget-rpi4-otg.342070/post-6725714 . IIRC foobar2000 + the WASAPI exclusive plugin played any-sample-rate wav/flac too (generated e.g. with sox).

Now the 384k/32 stream is working. 1) I had forgotten that the maximum endpoint size was still limited to the value for the 192k/32 stream, which is why no data were transmitted to the device from the PC. 2) I had to use SAI DMA in circular double buffer mode.
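
The endpoint size issue in point 1 comes down to simple arithmetic. A minimal sketch, assuming a high-speed isochronous endpoint serviced every 125 µs microframe (8000 intervals per second) and one extra sample frame of headroom for asynchronous rate adjustment; the function name and both assumptions are illustrative and not taken from the actual firmware.

```c
#include <stdint.h>

/* Hypothetical helper: bytes needed per isochronous packet.
 * frames = ceil(rate / intervals_per_sec), plus one frame of headroom
 * so the host can send a slightly longer packet in async mode. */
static uint32_t iso_max_packet_bytes(uint32_t rate_hz,
                                     uint32_t channels,
                                     uint32_t bytes_per_sample,
                                     uint32_t intervals_per_sec)
{
    uint32_t frames = (rate_hz + intervals_per_sec - 1u) / intervals_per_sec;
    return (frames + 1u) * channels * bytes_per_sample;
}
```

Under these assumptions a stereo 192k/32 stream needs 200 bytes per packet and a stereo 384k/32 stream needs 392 bytes, so an endpoint sized for 192k cannot carry the 384k stream.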

Now going to test the device with Linux.

Hi,

If interested, I have a working example of UAC 2.0 (192 kHz & 96 kHz) from ST, never released, so send me a PM.

@__BricKs__

I'm also migrating from UAC 1.0 to UAC 2.0. Could you post a working example? Unfortunately I'm a new member and can't send PMs, so please PM me first.

I didn't get an answer, but I managed on my own and UAC 2.0 is done. Right now my code is compatible with both UAC 1.0 and UAC 2.0.

The result of my work can be seen at

I had a look at the different readme information and key file headers. Most examples are related to 2 channels/stereo. I haven't seen examples/projects related to UAC2 multichannel features. So from this quick look, it is unfortunately not demonstrated that USB multichannel audio would be easily achievable on the stm32 MCU.

The NXP IMXRT10xx range seems to be in a better position there. I downloaded their SDK, and looking at their examples, they have some USB multichannel ones (5.1).

I really appreciate the stm32 and their Nucleo boards. We just miss a good USB UAC2.0 stack.

I have seen the tinyUSB and CherryUSB codes, but I don't know how mature they are for that application.

I do not know what data layout stm32 requires for multichannel SAI, but in the Linux USB audio gadget the number of channels is just a parameter, used in a few places. The blocks of interleaved samples delivered by each USB packet are just copied to the output buffer. Sending more channels just means receiving a longer block of bytes.

There should be no problems in using STM32 SAI for UAC2 multichannel audio. E.g. STM32F723 has 2 SAIs which can be used for 8 channel audio. STM32H7s have 4 SAIs so up to 16 channel audio is possible.

This is basically how it works with STM32 as well (and probably with any other MCU). But as each SAI in STM32 caters for 2 channels, multichannel audio requires more than one output buffer. E.g. 5.1 audio would require 3 buffers.
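
As a rough illustration of the "more than one output buffer" point, an interleaved multichannel block could be split into stereo pairs like this. It is only a sketch: it assumes 32-bit samples, an even channel count, and one stereo buffer per SAI output; the function name and buffer layout are made up for the example and real firmware would work on the DMA buffers directly.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: split `frames` frames of interleaved audio with
 * `channels` channels (assumed even) into channels/2 stereo buffers.
 * out[p] must have room for frames * 2 samples and receives
 * channels 2p and 2p+1 of every frame. */
static void split_to_stereo_pairs(const int32_t *in, size_t frames,
                                  size_t channels, int32_t *const out[])
{
    for (size_t f = 0; f < frames; f++) {
        for (size_t p = 0; p < channels / 2; p++) {
            out[p][2 * f]     = in[f * channels + 2 * p];
            out[p][2 * f + 1] = in[f * channels + 2 * p + 1];
        }
    }
}
```

For 5.1 audio this would be called with `channels` set to 6 and three output buffers, matching the 3 buffers mentioned above.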

In addition to I2S, STM32F7 & H7 SAIs support TDM-like protocols which could be used for up to 16 channels, but I haven't tried TDM myself.

Hi, since 2015 I have seen all the potential of those SAIs. They can also get a dedicated crystal for the audio clock, to get clean 44.1k and 48k.

But it took me months to achieve USB asynchronous mode, as it was not covered by the ST code for the stm32, even though on paper it was 100% feasible. Good hardware, incomplete software... For my part, I won't dig into a new uncertain adventure here with my limited knowledge of the USB standard and my coding skills. I'm just good at adjusting almost-good examples and integrating some ;-)

But NXP has the UAC2 stack. Could be easier to switch to those...

The fact that ST does not release an official UAC2 stack is a no-go for me, unfortunately...

JM
