Hi,

You all know that sound localisation relies mainly on interaural differences: level (ILD) and time (ITD).

These localisation cues apply to real sources but also to phantom sources generated by two loudspeakers in a stereo configuration.

To "improve" monophonic sound, some researchers have proposed pseudostereophonic systems, mainly with asymmetrical all-pass filters on the left and right channels, which widen the stereo image and increase the apparent source width.

Conversely, better loudspeaker and room symmetry (phase, level and frequency matching, toe-in, ...) should improve perceived localisation: better source accuracy and stability, less blur.

Here comes my question: how can we objectively measure subjective localisation?

I'm not looking for a complete auditory model to estimate the true source position, but rather for an indicator to compare localisation from various loudspeakers in rooms.

In the huge literature on auditory localisation, I found only a few works showing objective measurements of a phantom source:

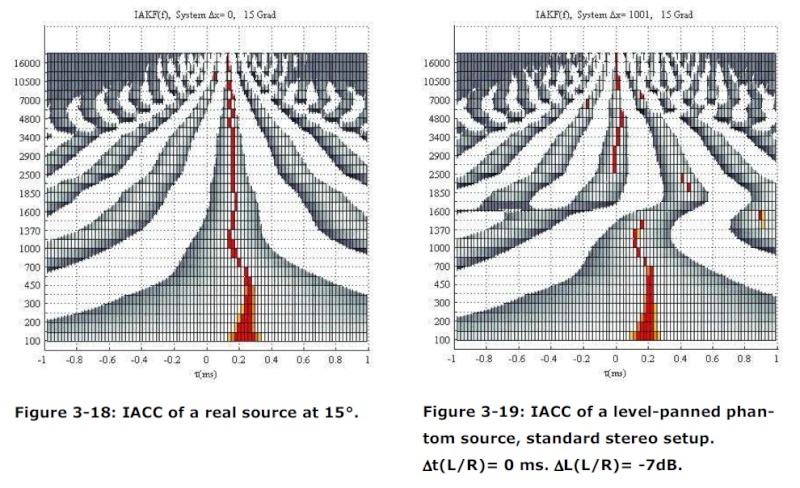

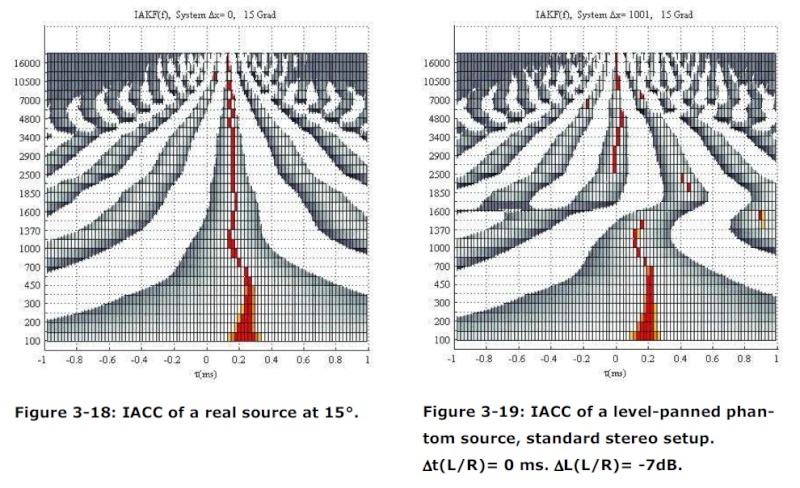

- "Perceptual differences between wavefield synthesis and stereophony" by Helmut Wittek, see page 42: frequency-dependent IACC measurement http://hauptmikrofon.de/HW/Wittek_thesis_201207.pdf

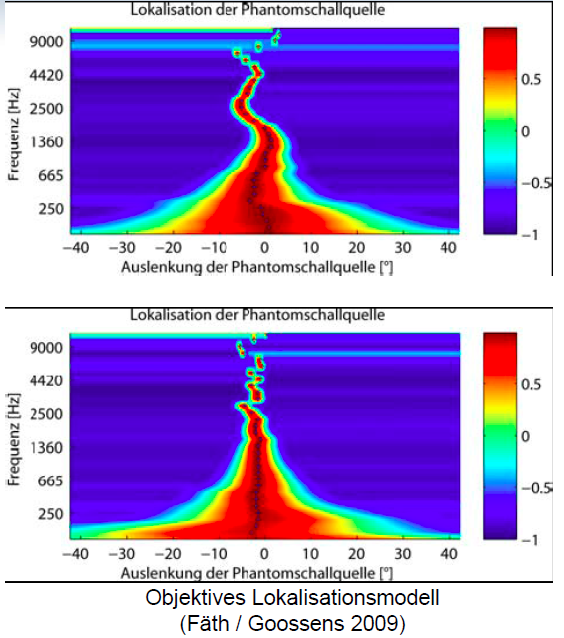

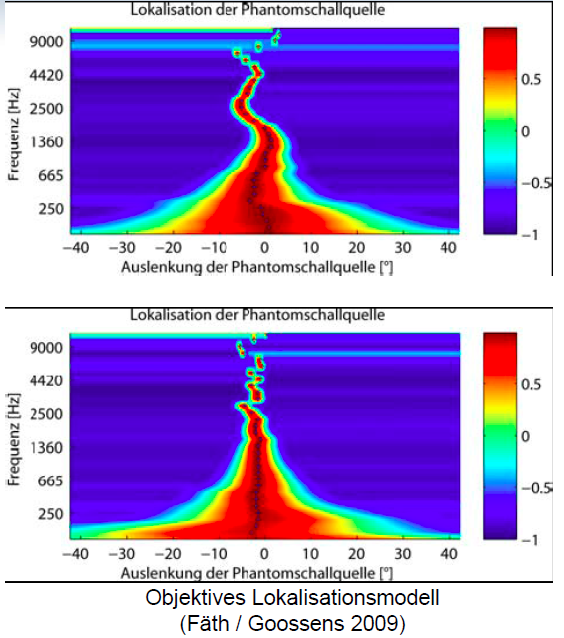

- "Möglichkeiten und Grenzen der elektronischen Raumkorrektur bei Lautsprecherwiedergabe" by Sebastian Goossens and Christian Gutmann, see page 15: group delay measured at lower frequencies, level differences at higher frequencies http://www.tonmeister.de/vdt/webdownloads/Seminare/2011/Sebastian_Goossens_VDT_Abhoerraeume_elektronisch_korrigieren_Nuernberg_2011.pdf

From measured impulse responses, we can compute frequency-dependent ILD and ITD and estimate how a "theoretically central" phantom source is perceived:

- azimuth angle: position, accuracy, stability

- source focus (no localization blur)

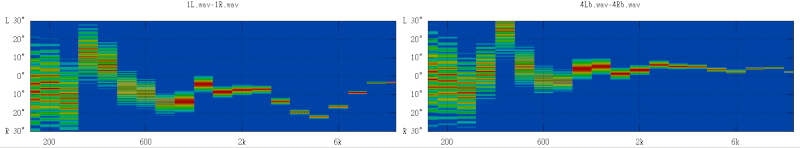

So I wrote a simple program that estimates the localisation position by computing ILD and ITD from IR measurements.

The software will be free (but runs on Windows only) and is not finished yet (it needs just a few more days). So I'd be happy to get some good suggestions here (and if anyone wants to debug it, tell me).

It has one nice feature: the calculation is open. It is a GNU Octave script that everybody can modify and improve.

For now, the script does the following:

- frequency-dependent windowing of the IR

- splitting the spectrum into 30 bands with an FIR filter

- calculating the L/R IACC and level difference in each band

- computing localisation from both IACC and level (time/intensity trading or adding)
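For readers who want to experiment, the per-band step in the list above could look roughly like the sketch below. It is not the POPS Octave script: it is plain Python, it assumes the two signals have already been windowed and band-filtered, and the names `ild_db` and `iacc_and_lag` are made up for this illustration. The cross-correlation search range of 1 ms and the use of the signed (not absolute) correlation peak are also assumptions.

```python
import math

def ild_db(left, right):
    """Level difference in dB (positive when the left signal carries more energy)."""
    e_left = sum(x * x for x in left)
    e_right = sum(x * x for x in right)
    return 10.0 * math.log10(e_left / e_right)

def iacc_and_lag(left, right, fs, max_lag_s=1e-3):
    """Peak of the normalised cross-correlation within +/- max_lag_s.

    Returns (peak value, lag in seconds). A positive lag means the right
    signal is a delayed copy of the left one.
    """
    n = min(len(left), len(right))
    max_lag = int(round(max_lag_s * fs))
    norm = math.sqrt(sum(x * x for x in left[:n]) * sum(x * x for x in right[:n]))
    best_val, best_lag = -2.0, 0  # any real correlation value is >= -1
    for lag in range(-max_lag, max_lag + 1):
        acc = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:
                acc += left[i] * right[j]
        val = acc / norm
        if val > best_val:
            best_val, best_lag = val, lag
    return best_val, best_lag / fs
```

Running both functions on each of the filtered bands would give one level difference and one time difference per band, which is the kind of input the last step in the list needs.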

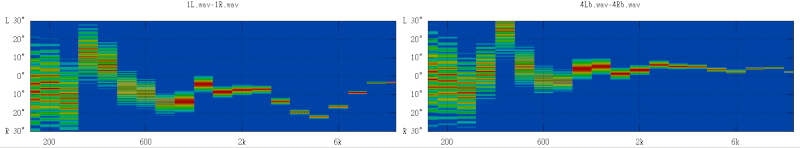

A first look at the results:

A few remaining questions:

- Can we really avoid measuring with an artificial head and simply work with the L and R impulse responses?

- Should we use a frequency-dependent time window? A longer window?

- Does the calculated IACC represent the real ITD over the whole spectrum?

- Is IACC + level enough, or are there other parameters to add, such as the HF signal envelope, HRTF, binaural crosstalk, ...?

- What frequency filtering should be used: gammatone, 1/6 octave, ...?

- How should ITD be calculated? IACC, direct phase, group delay, envelope of the signal?

- Time/angle and level/angle are currently linearly approximated: should this be changed?

- Should we measure over a whole listening area to check the stability of localisation?

- Should we also measure with non-central phantom sources?

- And the most important: does this analysis correlate with perception? How can we check it precisely?
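To make the question about the linear time/angle and level/angle approximation more concrete, here is a small Python sketch comparing a clipped linear mapping with a smoothly saturating one. The slopes (`DEG_PER_DB`, `DEG_PER_MS`), the 30 degree limit and both function names are hypothetical placeholders, not values from the POPS script or from the cited papers. Simply adding the two contributions is only one possible reading of "trading/adding".

```python
import math

# Hypothetical constants: placeholders for illustration only.
DEG_PER_DB = 2.0        # azimuth shift per dB of level difference
DEG_PER_MS = 30.0       # azimuth shift per millisecond of time difference
MAX_ANGLE_DEG = 30.0    # loudspeaker position in a 60 degree stereo triangle

def linear_shift(level_diff_db, time_diff_s):
    """Add the level and time contributions, then clip at the loudspeaker."""
    shift = DEG_PER_DB * level_diff_db + DEG_PER_MS * time_diff_s * 1e3
    return max(-MAX_ANGLE_DEG, min(MAX_ANGLE_DEG, shift))

def saturating_shift(level_diff_db, time_diff_s):
    """Same sum, compressed smoothly with tanh instead of hard clipping."""
    shift = DEG_PER_DB * level_diff_db + DEG_PER_MS * time_diff_s * 1e3
    return MAX_ANGLE_DEG * math.tanh(shift / MAX_ANGLE_DEG)
```

For small differences the two mappings nearly coincide. They only diverge when the estimated position approaches a loudspeaker, so for a nominally central phantom source the choice may matter less than the choice of slopes.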

Can you measure the length of an imaginary string?

With the help of imaginary books 😉

Spatial Hearing - The Psychophysics of Human Sound Localization: Jens Blauert

Anyway, here is an imaginary download page : blogohl: POPS Position Of Phantom Source

First results are encouraging (well, only for me).

And the most important: does this analysis correlate with perception? How can we check it precisely?

That's the most interesting question for me. Can we make your software closely relate to what people hear?

I'm game to test it, I have access to many speakers and many rooms. We just need to figure out some test procedures. I'll leave the heavy lifting (math and code) to you. I'll just test, measure and report. 🙂

Can you measure the length of an imaginary string?

Yes. Send me twenty imaginary dollars and I'll send you a 25' imaginary tape measure.

But it is, I think, a serious question that deserves more than a flippant response. We all talk about "imaging" and "localization" and even (some of us) "auditory scene" . . . but always in the most subjective of terms. There is no metric (that I know of) to quantify the "experience" in a way that would enable reasonable comparisons . . . not even a simple "81% of test subjects correctly placed the flute to the left of the oboe and both left of center". And we rarely distinguish, in our discussions, between the accurate reproduction/representation of a real scene and the synthetic creations of studio recordings or movie sound tracks.

Perhaps it's just hopeless . . . not enough people seem to care.

I have access to many speakers and many rooms. I'll just test, measure and report. 🙂

Thanks, I would be especially interested in results for high-directivity speakers: does the phantom image stay more central than with low-directivity speakers?

We all talk about "imaging" and "localization" and even (some of us) "auditory scene" . . . but always in the most subjective of terms. There is no metric (that I know of) to quantify the "experience" in a way that would enable reasonable comparisons . . . not even a simple "81% of test subjects correctly placed the flute to the left of the oboe and both left of center".

Here I am all with dewardh. 🙂

I believe we must start with a lower level of precision than localization angles. In my place I can deliberately change those angles by changing the proportions of the stereo triangle or the degree of wall absorption. We would have to agree on exactly the same room situations to come to equal/comparable terms.

How about instead testing the degree of resolution first? Which different instruments/sources can we separate spatially in an "auditory scene" (Yes, I'm one of those using that term 😉)?

My experience is that percussion instruments are quite easy to keep apart. For a first and very basic start, and to keep us from infringing copyrights on musical content, we could start at this address:

video

We would start "Video 3" on that page, move forward to 2:55 min and play the "Black kit". After listening, we tell each other how many different locations we can identify for the parts of the drum kit and how they are distributed.

Would this be a feasible way to compare?

Rudolf

Would this be a feasible way to compare?

Don't think this would work well. Once you know how something should sound, it will. I've tricked myself many times with the barrier. When the barrier was removed, spatial quality didn't degrade. But when I listened the next day, starting without the barrier, spatial quality was clearly degraded. It seems the brain holds on to a certain "processing" for some time.

...does the phantom image stay more central than with low directivity speakers ?

My experience says "No". But that's in large spaces, where I've heard omnis produce a very solid central image. It will be interesting to test wide vs narrow vs room size.

Hi jlo,

You may find useful techniques for localization estimates in the doctoral thesis "Binaural localization and separation techniques" by Harald Viste, which is available for download.

One main finding is that better estimates are obtained by considering the ITD and ILD jointly in each frequency band. Angle estimates from ILD tend to be noisy, while estimates from ITD are ambiguous above 1.5 kHz. The noisy ILD estimates can then be used to select the right angle estimate from the ITD candidates.

Relevance to phantom source localization? Hard to say, but worth a try at least? The above operates on binaural signals, of course, necessitating some sort of model head or mics in your ears. The signals are analyzed with a simplified head model, i.e. you don't need real HRTF data for useful results.
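The idea of letting a noisy ILD estimate pick among ambiguous ITD candidates can be sketched in a few lines of Python. This is only an illustration of the principle, not the method from the thesis. The sine-law head model, the ear distance of 0.18 m and all function names are assumptions made for the example.

```python
import math

SPEED_OF_SOUND = 343.0   # m/s
EAR_DISTANCE = 0.18      # m, hypothetical value for a simple sine-law head model
MAX_ITD = EAR_DISTANCE / SPEED_OF_SOUND

def itd_candidates(phase_diff_rad, freq_hz):
    """All ITDs within +/- MAX_ITD that match a wrapped interaural phase difference."""
    period = 1.0 / freq_hz
    wrapped = math.remainder(phase_diff_rad, 2.0 * math.pi)
    base = wrapped / (2.0 * math.pi * freq_hz)
    k_max = int(math.ceil(MAX_ITD / period)) + 1
    candidates = []
    for k in range(-k_max, k_max + 1):
        itd = base + k * period
        if abs(itd) <= MAX_ITD:
            candidates.append(itd)
    return candidates

def angle_from_itd(itd_s):
    """Sine-law mapping from ITD to azimuth in degrees."""
    ratio = max(-1.0, min(1.0, itd_s / MAX_ITD))
    return math.degrees(math.asin(ratio))

def resolve_angle(phase_diff_rad, freq_hz, ild_angle_deg):
    """Pick the ITD-based angle closest to the (noisy) ILD-based angle."""
    angles = [angle_from_itd(t) for t in itd_candidates(phase_diff_rad, freq_hz)]
    if not angles:
        return ild_angle_deg  # no valid ITD candidate: fall back to the ILD estimate
    return min(angles, key=lambda a: abs(a - ild_angle_deg))
```

At 3 kHz with this head model, a source at 40 degrees produces three phase-compatible candidates, and an ILD estimate that is off by several degrees is still enough to select the right one.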

When the barrier was removed, spatial quality didn't degrade. But when I listened the next day, starting without the barrier, spatial quality was clearly degraded. It seems the brain holds on to a certain "processing" for some time.

I find this to be the case when I listen to music with headphones right after a TV show or movie on my projector. The sound sticks to the screen.

I would like to exploit more visual cues in a stereo setup. They make such a crazy big difference. Just the appearance of a center speaker helps to center the phantom image for me.

The sound sticks to the screen . . . I would like to exploit more visual cues in a stereo setup

I think that's nine tenths of the reason we hear reports of "better" imaging with multi-channel HT systems . . .

To measure the localization, consider the simple case:

One speaker in each corner of the room, with the microphone in the middle (with a time delay to offset the microphone, too).

Turn one speaker off, and half of the room can fill up with decay in front of the microphone.

Turn both speakers on, and the decay in front of the microphone should go down.

If it doesn't, then your window (or gate?) isn't shallow enough, and the decay from behind the microphone is coming back around to the front and being recorded by the microphone.

You'd also be able to note a stronger amplitude at some frequencies, especially the summed frequencies between the two speakers, where dips and peaks would normally occur.

Within that context, the size of the room will determine the peaks and dips, but the thing to note is the rise in amplitude when no equalizer adjustment has been made, compared with a volume increase across the entire frequency band.

It's quite a gamble without an adjustable window and/or gate, as is the concept of the room itself interacting with the recorded measurement when things are shallow enough to pick up only what is in front of the microphone.

I think that's nine tenths of the reason we hear reports of "better" imaging with multi-channel HT systems . . .

I don't think this is it. I think the key is that you rely less on phantom imaging. An actual speaker outputting information is much better than a phantom image. It is certainly much more stable, that's for sure.

I think this is a highly interesting subject! A few years back I did something very similar, with Octave too 🙂

This was also my goal, but I concentrated on ITD. I tried to see through analysis how speaker directivity affects ITD in a small room.

Plenty of good points there. You really have given these matters some thought 🙂

Here are some of my thoughts:

- If you plan to analyse ILD, you really should have a head diffraction model.

- I used a wavelet transform, so time windowing was not an issue but rather a built-in feature. My focus was to include room reflections to see their effect on ITD, not to filter them out.

- The wavelet was matched to model cochlear frequency filtering. I used ERB to define the bandwidth. Gammatones are fine too; I've used them occasionally as well.

- I calculated ITD from the phases of the interaural wavelet transform. It's straightforward and very simple.

As I said, it has been a few years since my last analysis. I had great plans but was limited in available time 😀

- Elias
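For anyone who wants to try the phase-based ITD idea from the post above, here is a rough Python sketch. It does not reproduce the wavelet analysis described there: a plain single-frequency Fourier projection stands in for the wavelet transform, and the function names are made up for the example. The ERB expression is the commonly used Glasberg and Moore approximation, which may or may not be the one used in the original analysis.

```python
import cmath
import math

def erb_hz(freq_hz):
    """Equivalent rectangular bandwidth in Hz (Glasberg and Moore approximation)."""
    return 24.7 * (4.37 * freq_hz / 1000.0 + 1.0)

def band_component(signal, freq_hz, fs):
    """Complex amplitude of `signal` at freq_hz (single-frequency Fourier projection)."""
    return sum(x * cmath.exp(-2j * math.pi * freq_hz * n / fs)
               for n, x in enumerate(signal))

def itd_from_phase(left, right, freq_hz, fs):
    """ITD in seconds from the interaural phase at freq_hz.

    Positive when the right signal lags the left one. Only unambiguous
    while the true ITD is shorter than half a period of freq_hz.
    """
    cross = band_component(left, freq_hz, fs) * band_component(right, freq_hz, fs).conjugate()
    return cmath.phase(cross) / (2.0 * math.pi * freq_hz)
```

A wavelet whose bandwidth follows `erb_hz` would replace `band_component` in a closer imitation of the approach. The phase-to-ITD step would stay the same.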

Hi

Just a thought:

As there can be a considerable difference between two differently designed pairs of loudspeakers in their ability to produce a mono phantom image and also suppress the R and L origins (even outside of the room's effects), it might be useful to first use a single source in your room's center and measure to see what a real mono source looks like (using your metric).

Also, our hearing sensitivity curve is a pretty large clue as to where our acuity is as well, so a small full-range driver and voice-range test signals may be worth trying in addition to wideband ones.

Fwiw, Pat Brown (SynAudCon) uses B-format microphones to derive multichannel room impulse responses and has written some on the topic of capturing stereo in general, including this piece on accuracy versus realism:

Accuracy vs Realism Synergetic Audio Concepts

These may be of general interest too.

http://www.mmad.info/Collected Papers/Multichannel/24th ICP Banff 2003 Paper (16 pages).PDF

http://www.2010.simpar.org/ws/sites/DSR2010/09-DSR.pdf

Best,

Tom

there can be a considerable difference between two differently designed pairs of loudspeakers in their ability to produce a mono phantom image and also suppress the R and L origins

Hi Tom,

Has that effect ever been investigated?
